Micro-sized cameras have great potential to spot problems in the human body and enable sensing for super-small robots, but past approaches captured fuzzy, distorted images with limited fields of view.

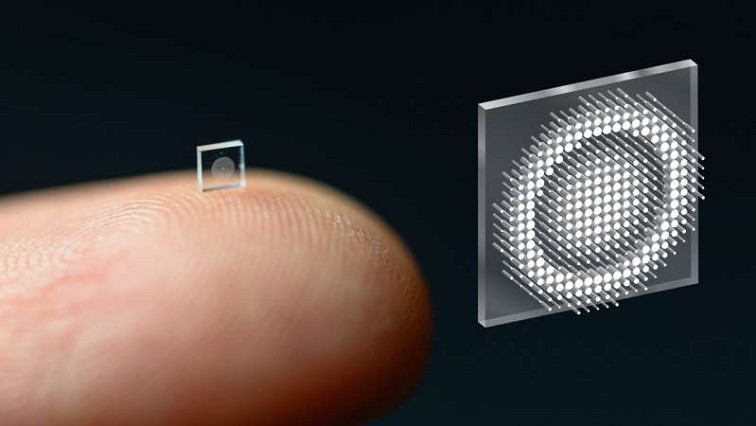

Now, researchers at Princeton University and the University of Washington have overcome these obstacles with an ultracompact camera the size of a coarse grain of salt. The new system can produce crisp, full-color images on par with a conventional compound camera lens 500,000 times larger in volume, the researchers reported in a paper published Nov. 29 in Nature Communications.

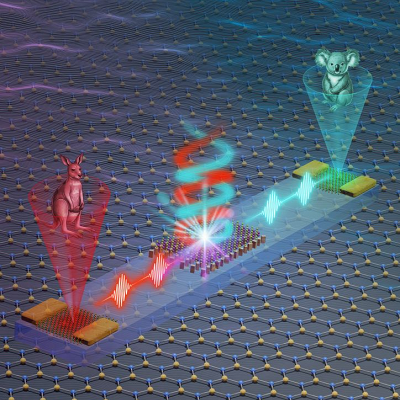

Built on a joint design of the camera’s hardware and computational processing, the system could enable minimally invasive endoscopy with medical robots to diagnose and treat diseases, and improve imaging for other robots with size and weight constraints. Arrays of thousands of such cameras could be used for full-scene sensing, turning surfaces into cameras.

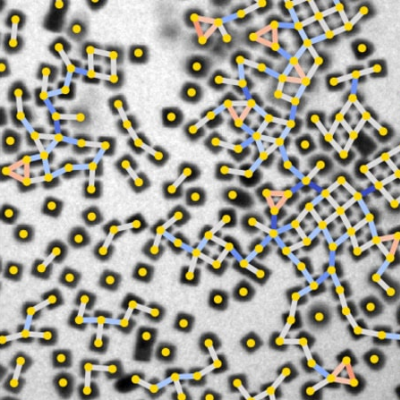

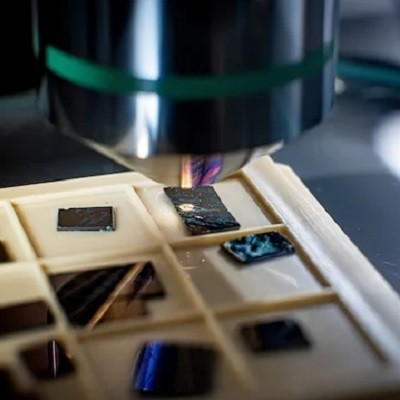

While a traditional camera uses a series of curved glass or plastic lenses to bend light rays into focus, the new optical system relies on a technology called a metasurface, which can be produced much like a computer chip. Just half a millimeter wide, the metasurface is studded with 1.6 million cylindrical posts, each roughly the size of the human immunodeficiency virus (HIV).
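Those two figures allow a rough back-of-the-envelope estimate of how tightly the posts are packed. The sketch below assumes the posts sit on a uniform square grid that fills either a circular or a square aperture half a millimeter across. Neither the grid layout nor the aperture shape is stated in this article, and the function name `mean_post_pitch` is purely illustrative.

```python
import math

APERTURE_WIDTH_M = 0.5e-3  # "half a millimeter wide" (from the article)
NUM_POSTS = 1.6e6          # "1.6 million cylindrical posts" (from the article)


def mean_post_pitch(width_m: float, num_posts: float, circular: bool = True) -> float:
    """Average center-to-center post spacing in meters.

    Assumption (not from the article): posts tile the aperture on a
    uniform square grid, so each post occupies area / num_posts.
    """
    if circular:
        area = math.pi * (width_m / 2) ** 2  # disc of the given diameter
    else:
        area = width_m ** 2                  # square of the given side
    return math.sqrt(area / num_posts)


pitch_circular_nm = mean_post_pitch(APERTURE_WIDTH_M, NUM_POSTS, circular=True) * 1e9
pitch_square_nm = mean_post_pitch(APERTURE_WIDTH_M, NUM_POSTS, circular=False) * 1e9

print(f"circular aperture: ~{pitch_circular_nm:.0f} nm between posts")
print(f"square aperture:   ~{pitch_square_nm:.0f} nm between posts")
```

Under either assumption the spacing comes out at a few hundred nanometers, comparable to or smaller than the wavelengths of visible light (roughly 400 to 700 nm). Note that this is the spacing between posts, not the diameter of an individual post.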

Each post has a unique geometry and functions like an optical antenna. Varying the design of each post is necessary to correctly shape the entire optical wavefront. With the help of machine learning-based algorithms, the posts’ interactions with light combine to produce the highest-quality images and widest field of view for a full-color metasurface camera developed to date.

A key innovation in the camera’s creation was the integrated design of the optical surface and the signal processing algorithms that produce the image. This boosted the camera’s performance in natural light conditions, in contrast to previous metasurface cameras that required the pure laser light of a laboratory or other ideal conditions to produce high-quality images, said Felix Heide, the study’s senior author and an assistant professor of computer science at Princeton.
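To illustrate why the processing stage matters, the sketch below blurs a simple test scene with a known point spread function (PSF), adds a little sensor noise, and then recovers a sharper image by deconvolution. It uses a classical Wiener filter as a stand-in; the researchers' system uses neural network-based processing, which is not reproduced here. The Gaussian PSF, the noise level, the regularization constant `k`, and all function names are assumptions chosen for illustration.

```python
import numpy as np


def gaussian_psf(n: int, sigma: float) -> np.ndarray:
    """Centered, normalized Gaussian blur kernel on an n x n grid (toy optics)."""
    ax = np.arange(n) - n // 2
    xx, yy = np.meshgrid(ax, ax)
    psf = np.exp(-(xx**2 + yy**2) / (2 * sigma**2))
    return psf / psf.sum()


def blur(scene: np.ndarray, psf: np.ndarray) -> np.ndarray:
    """Circular convolution of the scene with the PSF, computed via FFT."""
    otf = np.fft.fft2(np.fft.ifftshift(psf))
    return np.real(np.fft.ifft2(np.fft.fft2(scene) * otf))


def wiener_deconvolve(measured: np.ndarray, psf: np.ndarray, k: float) -> np.ndarray:
    """Wiener-style inverse filter; k limits noise amplification where the optics pass little signal."""
    otf = np.fft.fft2(np.fft.ifftshift(psf))
    wiener = np.conj(otf) / (np.abs(otf) ** 2 + k)
    return np.real(np.fft.ifft2(np.fft.fft2(measured) * wiener))


rng = np.random.default_rng(0)
n = 64
scene = np.zeros((n, n))
scene[20:44, 20:44] = 1.0  # bright square on a dark background

psf = gaussian_psf(n, sigma=1.5)
measured = blur(scene, psf) + rng.normal(0.0, 0.002, size=scene.shape)
restored = wiener_deconvolve(measured, psf, k=1e-3)

mse_measured = float(np.mean((measured - scene) ** 2))
mse_restored = float(np.mean((restored - scene) ** 2))
print(f"error before processing: {mse_measured:.5f}")
print(f"error after processing:  {mse_restored:.5f}")
```

In this toy setting the filter is tuned by hand after the optics are fixed. The joint design described above goes further by optimizing the optical surface and the reconstruction algorithm together.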

The researchers compared images produced with their system to the results of previous metasurface cameras, as well as images captured by a conventional compound optic that uses a series of six refractive lenses. Aside from a bit of blurring at the edges of the frame, the nano-sized camera’s images were comparable to those of the traditional lens setup, which is more than 500,000 times larger in volume.

Other ultracompact metasurface lenses have suffered from major image distortions, small fields of view, and limited ability to capture the full spectrum of visible light—referred to as RGB imaging because it combines red, green and blue to produce different hues.

“It’s been a challenge to design and configure these little microstructures to do what you want,” said Ethan Tseng, a computer science Ph.D. student at Princeton who co-led the study. “For this specific task of capturing large field of view RGB images, it’s challenging because there are millions of these little microstructures, and it’s not clear how to design them in an optimal way.”

Previous micro-sized cameras (left) captured fuzzy, distorted images with limited fields of view. A new system called neural nano-optics (right) can produce crisp, full-color images on par with a conventional compound camera lens.

Co-lead author Shane Colburn tackled this challenge by creating a computational simulator to automate testing of different nano-antenna configurations. Because of the number of antennas and the complexity of their interactions with light, this type of simulation can use “massive amounts of memory and time,” said Colburn. He developed a model to efficiently approximate the metasurfaces’ image production capabilities with sufficient accuracy.
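For a flavor of what an optical simulator of this kind computes, here is a minimal sketch of a textbook forward model, which is not the researchers' own. It treats the optic as a circular aperture with a phase profile, obtains the point spread function (PSF) from a Fourier transform (the far-field, or Fraunhofer, approximation), and forms the sensor image by convolving the scene with that PSF. The grid size, the quadratic phase error, and the function names are all hypothetical.

```python
import numpy as np


def psf_from_phase(phase: np.ndarray, aperture: np.ndarray) -> np.ndarray:
    """Intensity PSF of an aperture with the given phase (far-field approximation)."""
    pupil = aperture * np.exp(1j * phase)
    field = np.fft.fftshift(np.fft.fft2(np.fft.ifftshift(pupil)))
    psf = np.abs(field) ** 2
    return psf / psf.sum()  # normalize so total energy is 1


def simulate_sensor_image(scene: np.ndarray, psf: np.ndarray) -> np.ndarray:
    """Noise-free sensor image: circular convolution of scene and PSF via FFT."""
    otf = np.fft.fft2(np.fft.ifftshift(psf))
    return np.real(np.fft.ifft2(np.fft.fft2(scene) * otf))


n = 64
y, x = np.mgrid[-n // 2 : n // 2, -n // 2 : n // 2]
r2 = (x**2 + y**2) / (n / 4) ** 2      # squared radius, normalized to the aperture edge
aperture = (r2 <= 1.0).astype(float)   # circular opening, radius n/4 pixels

flat_phase = np.zeros((n, n))          # ideal optic: no phase error
defocus_phase = 6.0 * r2               # hypothetical quadratic phase error, in radians

psf_sharp = psf_from_phase(flat_phase, aperture)
psf_blurred = psf_from_phase(defocus_phase, aperture)

scene = np.zeros((n, n))
scene[n // 2, n // 2] = 1.0            # single point source at the center
image = simulate_sensor_image(scene, psf_blurred)

print(f"peak of ideal PSF:     {psf_sharp.max():.4f}")
print(f"peak of defocused PSF: {psf_blurred.max():.4f}")
```

A single 64-by-64 grid like this runs instantly. Modeling more than a million individually shaped posts and their interactions with light is far more demanding, which is why an efficient approximation was needed.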

Colburn conducted the work as a Ph.D. student at the University of Washington Department of Electrical & Computer Engineering (UW ECE), where he is now an affiliate assistant professor. He also directs system design at Tunoptix, a Seattle-based company that is commercializing metasurface imaging technologies. Tunoptix was cofounded by Colburn’s graduate adviser Arka Majumdar, an associate professor at the University of Washington in the ECE and physics departments and a coauthor of the study.

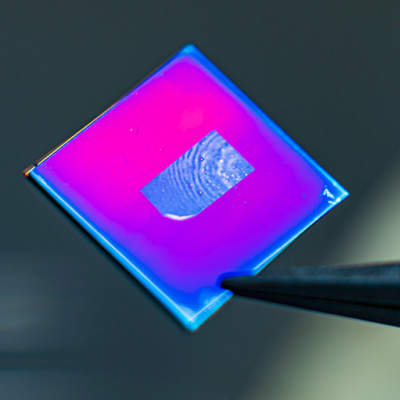

Coauthor James Whitehead, a Ph.D. student at UW ECE, fabricated the metasurfaces, which are based on silicon nitride, a glass-like material that is compatible with standard semiconductor manufacturing methods used for computer chips—meaning that a given metasurface design could be easily mass-produced at lower cost than the lenses in conventional cameras.

“Although the approach to optical design is not new, this is the first system that uses a surface optical technology in the front end and neural-based processing in the back,” said Joseph Mait, a consultant at Mait-Optik and a former senior researcher and chief scientist at the U.S. Army Research Laboratory.

“The significance of the published work is completing the Herculean task to jointly design the size, shape and location of the metasurface’s million features and the parameters of the post-detection processing to achieve the desired imaging performance,” added Mait, who was not involved in the study.

Heide and his colleagues are now working to add more computational abilities to the camera itself. Beyond optimizing image quality, they would like to add capabilities for object detection and other sensing modalities relevant for medicine and robotics.

Heide also envisions using ultracompact imagers to create “surfaces as sensors.” “We could turn individual surfaces into cameras that have ultra-high resolution, so you wouldn’t need three cameras on the back of your phone anymore, but the whole back of your phone would become one giant camera. We can think of completely different ways to build devices in the future,” he said.
